China’s AI Regulation: Shaping The Future Of AI

China Regulate AI

On November 30, 2022, OpenAI released ChatGPT, ushering in a new era of generative AI. Half a year later, AI has already driven tremendous advances across many fields. Given this rapid development, there’s little doubt that we need new laws and guidelines to keep AI on a safe and healthy path. China is one of the first countries to act on this, so let’s see how it plans to regulate AI.

Why Should We Regulate AI?

“Generative AI” describes AI tools such as ChatGPT, Midjourney, Bard, and Bing Chat, which can generate text, images, video, code, and other media.

What sets these tools apart from earlier content-generation technology is that they require little technical skill: they can create content quickly and precisely from a simple text prompt. They can also mimic human language, speech, and creativity, producing realistic and diverse outputs.

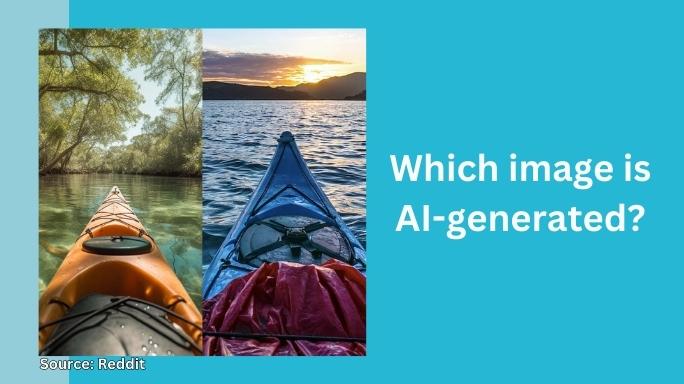

But generative AI is not all fun and games. It can also be dangerous and harmful. For example, it can be used to produce fake news, deepfakes, spam, phishing messages, malware, and other harmful or illegal content.

It can also be used to plagiarize other people’s ideas, trick people into believing falsehoods, invade privacy, break the law, and cause chaos.

Realistic AI Image

And guess what? Generative AI is trained on the Internet data that we all use and share. That means the companies that build generative AI can access huge amounts of data that might include your personal or sensitive information. And you don’t want that to happen without your consent, right?

That’s why we need to regulate generative AI and make sure it’s used ethically and responsibly. And that’s exactly what China is doing. China might not be the leader in AI development, but it’s one of the first countries to introduce rules and guidelines for generative AI and its impact on society.

On April 11, 2023, the Cyberspace Administration of China (CAC) issued draft Measures for the Management of Generative Artificial Intelligence Services. These measures are designed to control generative AI services and their providers in China and protect users and society from potential harm.

4 Key Points Of China’s Draft Measures For Generative AI

China has come up with new rules for generative AI services and their providers. They are among the first rules anywhere written specifically for generative AI, and their goal is to make sure that generative AI is used safely and ethically in China.

They cover four main points: what counts as a generative AI service, how training data may be used, what providers must and must not do, and what happens if the rules are broken.

Definition Of Generative AI Services

The Measures define generative AI services as services that use AI technology to generate content such as text, images, video, code, etc. (Article 2)

These services can be provided through various platforms, such as websites, apps, software, hardware, etc.

Generative AI service providers are the people or organizations that provide generative AI services to users or the public. They can be developers, operators, distributors, or intermediaries of generative AI services.

Legality Of Data

The Measures stipulate that data used to train generative AI models must meet certain requirements for quality, security, and legality. (Article 7)

Data quality means that the data has to be true, accurate, objective, and diverse. Data security means that the data has to be encrypted and protected from unauthorized access or leakage.

Data legality means that the data has to respect intellectual property rights and not contain illegal or harmful content.

Generative AI service providers also have to obtain the consent of data subjects before using their personal information for training purposes.

Duties And Obligations

Generative AI service providers have to make sure that the content created by their services follows laws and regulations and respects core socialist values. This means that the content can’t have any information that is illegal, harmful, false, misleading, or infringing on others’ rights or interests.

Generative AI service providers must also require users to register with their real identities and information before using their services. This is to prevent anonymous or malicious use of generative AI services. (Article 9)

Generative AI service providers have to check their services regularly and report any risks or problems to authorities. This is to make sure that their services are safe and reliable and don’t cause any negative effects on users or society.

Generative AI service providers also have to take quick actions to stop or fix any harmful or illegal content created by their services and cooperate with investigations. This is to reduce the damage and liability caused by their services and keep social order and stability.

Consequences Of Violating The Measures

The Measures also specify the consequences of breaking the rules or causing harm to users or society.

If a user is found using generative AI products to break laws or regulations, or any of the rules above, the provider must suspend or terminate that user’s service. (Article 19)

Generative AI service providers may face fines, suspension of services, revocation of licenses, or even criminal charges for breaking the rules or causing harm to users or society. The severity of the consequences depends on the type and extent of the violation or harm. (Article 20)

Providers that break the rules and refuse to correct them, or whose violations are serious, can be fined between 10,000 yuan ($1,414) and 100,000 yuan ($14,141); conduct that amounts to a crime can also lead to a criminal investigation.

China’s AI Regulation vs. The US And EU

China is not the only one trying to regulate AI and its impact on society. Other jurisdictions, such as the EU and the US, are also working on their own rules and policies for AI. But China does some things differently.

Similarities

Both China and other jurisdictions agree that regulating AI is important and urgent. They all recognize that AI is a powerful, transformative technology that can help or harm many different sectors. They also realize that AI raises ethical and social questions that need to be answered, such as fairness, accountability, transparency, privacy, security, human dignity, and human rights.

Another thing they have in common is a risk-based approach to regulating AI. This means focusing on AI uses with large or lasting effects on users or society, such as those related to health, education, employment, justice, and public safety. They also try to balance the risks and benefits of AI and make sure it’s used ethically and responsibly.

A third thing they have in common is support for key principles of trustworthy AI. These include human-centricity, human oversight, diversity, inclusiveness, non-discrimination, fairness, transparency, explainability, privacy, security, reliability, robustness, and accountability. These principles appear in documents and initiatives on both sides, such as the Beijing AI Principles, the EU Ethics Guidelines for Trustworthy AI, and the US National Artificial Intelligence Initiative Act.

Differences

One way they are different is that China has a more proactive and comprehensive approach to regulating AI than other countries, which tend to be more reactive and fragmented.

China has issued several laws, regulations, standards, and guidelines covering different aspects of AI development and use, such as data protection, personal information protection, cybersecurity, recommendation algorithms, deep synthesis technologies, and generative AI services.

China has also set up a clear leadership and coordination system for AI governance, led by the CAC and involving multiple ministries and agencies, and it has made AI regulation part of its national strategy for AI development.

Other jurisdictions, by contrast, have so far been more reactive and fragmented.

The US and EU have not yet adopted a unified framework for AI regulation; instead, they rely on existing or sector-specific laws that may not fully address the particular challenges of AI.

They also involve more decentralized authorities and a wider range of stakeholders in AI governance, which may create gaps or conflicts in oversight and enforcement. They also have more varied, sometimes competing, interests and values in AI development and use, which can make cooperation and alignment harder.

Trade-offs Between Trust And Innovation

China is trying to keep up with the fast growth of AI technology by setting clear and strict rules. But it’s not all good.

On one hand, China’s rules can make AI more trustworthy and responsible. They can also make AI more ethical and safe and can help China become a big player in the world of AI.

But on the other hand, China’s rules can also slow down AI development and make it more expensive. They can limit the variety and creativity of AI, and they can conflict with other countries’ rules. Plus, they can raise questions about China’s real intentions and values behind its AI regulation.

China is one of the first countries to try to balance the opportunities and challenges of AI, but its approach is neither the only one nor necessarily the best. We may soon see other countries introduce their own regulations, especially after Italy temporarily banned ChatGPT.

Conclusion

China’s AI regulation is a brave and smart move that shows its leadership and vision in AI development and innovation. But it also brings up some questions and challenges for China’s AI industry and innovation as well as other countries’ AI policies and practices. How will China balance the trade-offs between regulation and innovation? How will China work with other countries, especially the EU and the US, on AI regulation? How will China deal with the ethical and social issues that generative AI and other AI applications cause?

These are not easy questions to answer, and the draft Measures are not the final answer either. They are still new and need extensive review and feedback from many stakeholders. They also need to be tested and improved in practice. The goal is to find a way to govern AI development that protects society without holding back technological progress.