7 ChatGPT Alternatives That Can Run Locally

What Are The Best Local ChatGPT Alternatives?

While ChatGPT has undoubtedly revolutionized natural language processing, it is worth exploring options that offer similar functionality without relying on external servers or an internet connection.

In this article, we will dive into seven self-contained ChatGPT alternatives that can run locally. Each of these chatbots can generate human-like text responses right from your own machine.

Why Use A Local Chatbot?

Interest in running ChatGPT-style chatbots offline has grown noticeably this year. Here is why you might want to switch to a local chatbot:

- Data Privacy

With a local chatbot, all data and interactions remain on your local machine or network. This provides greater control over sensitive information and ensures that user data is not shared with external servers or cloud services.

- Reduced Latency

Local chatbots run directly on your machine, so user queries do not have to travel to remote servers and back. Removing that network round trip can make responses feel faster and more interactive, although actual speed depends heavily on your hardware and the size of the model.

- Offline Operation

Local chatbots do not require an internet connection to function. This is particularly useful in situations where internet connectivity is limited, unstable, or not available at all.

Offline operation allows continuous access to the chatbot's functionality regardless of internet access.

- Customization and Control

By running a chatbot locally, you have complete control over its behavior, responses, and underlying models.

You can customize the chatbot to suit your specific requirements, integrate it with other local applications, and tailor its functionality to meet your unique needs.

- Compliance and Security

Some industries and organizations have strict compliance requirements that necessitate keeping data and operations within a specific network or environment.

Local chatbots offer a solution for maintaining compliance and ensuring that sensitive data remains within the designated network.

ChatGPT Alternatives That Can Run Locally

While there are many free alternatives to ChatGPT, far fewer can run on a local PC. The seven options below give you a ChatGPT-like chatbot that you can experiment with on your own hardware.

Raven RWKV

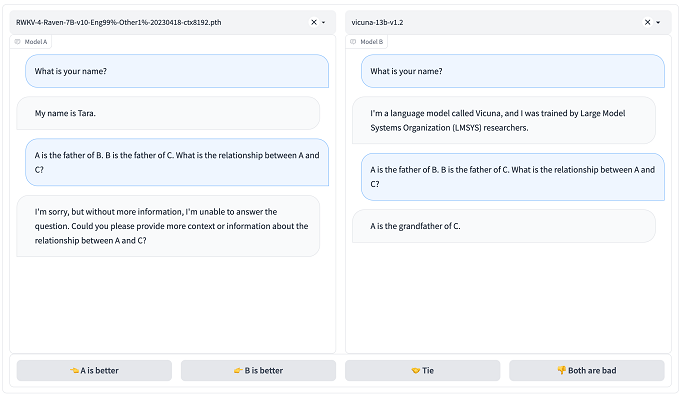

Raven RWKV Is Built For Fast, Memory-Efficient Inference

Raven RWKV is a conversational AI tool developed by BlinkDL that could be the perfect fit for your requirements.

Powered by the RWKV language model, which is built on a recurrent neural network (RNN) architecture, this chatbot aims to deliver ChatGPT-style conversations with fast processing and lower hardware demands.

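RWKV combines the strengths of RNN and transformer architectures: it can be trained in parallel like a transformer, then run as an RNN at inference time, carrying a fixed-size state from token to token instead of attending over every previous token. Its developers highlight strong performance, fast inference, reduced VRAM usage, fast training, long context lengths, and free sentence embeddings.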
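To see why the RNN view saves memory, here is a simplified, single-channel sketch modeled on the "WKV" weighted average from the RWKV-4 paper, written in plain Python. It computes the same output two ways: a parallel form that looks back over every earlier token, as attention does, and a recurrent form that carries only two running sums. The names `decay` (the paper's w) and `bonus` (the paper's u) and the toy inputs are illustrative assumptions; the real model uses learned per-channel vectors and a numerically stabilized version of this recurrence.

```python
import math

# Simplified single-channel WKV sketch (assumption: scalar decay/bonus,
# no numerical stabilization, unlike the real RWKV implementation).

def wkv_parallel(k, v, decay, bonus):
    """Reference form: each output looks back over all earlier tokens."""
    out = []
    for t in range(len(k)):
        num = den = 0.0
        for i in range(t):  # earlier tokens, exponentially decayed by distance
            weight = math.exp(-(t - 1 - i) * decay + k[i])
            num += weight * v[i]
            den += weight
        # the current token gets its own "bonus" weight
        current = math.exp(bonus + k[t])
        out.append((num + current * v[t]) / (den + current))
    return out

def wkv_recurrent(k, v, decay, bonus):
    """RNN form: only two running sums are carried between tokens."""
    a = b = 0.0  # decayed sums of exp(k) * v and exp(k) over past tokens
    out = []
    for kt, vt in zip(k, v):
        current = math.exp(bonus + kt)
        out.append((a + current * vt) / (b + current))
        # fold the current token into the state, decaying older contributions
        a = math.exp(-decay) * a + math.exp(kt) * vt
        b = math.exp(-decay) * b + math.exp(kt)
    return out

keys = [0.1, -0.3, 0.5, 0.2]
values = [1.0, 2.0, -1.0, 0.5]
print(wkv_parallel(keys, values, decay=0.5, bonus=0.3))
print(wkv_recurrent(keys, values, decay=0.5, bonus=0.3))
```

Both lists agree up to floating-point rounding. The parallel form's work per token grows with the sequence length, while the recurrent form's state stays the same size no matter how long the conversation gets, which is the intuition behind RWKV's lower memory use at inference time.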

Raven RWKV has been fine-tuned on diverse datasets such as Stanford Alpaca and CodeAlpaca, which helps it handle a wide range of conversation topics.

According to its developers, RWKV matches the quality and scalability of transformer models of similar size while consuming less VRAM.

If you are seeking a local ChatGPT chatbot alternative that strikes a balance between resource efficiency and performance, the Raven RWKV chatbot is an excellent choice.

Vicuna

Vicuna, a collaboration between UC Berkeley, Carnegie Mellon University, Stanford, and UC San Diego, is another ChatGPT-like language model that can run locally. It is an instruction-following large language model (LLM) based on LLaMA.

Vicuna is available in two sizes, with either 7 billion or 13 billion parameters.

To fine-tune Vicuna, the researchers leveraged the training code from Alpaca and incorporated approximately 70,000 user-shared conversations collected from ShareGPT, a platform where users can share their interactions with ChatGPT.

The training process was also adjusted to handle longer conversations, raising the maximum context length from Alpaca's 512 tokens to 2,048 tokens.

In a preliminary and admittedly informal evaluation that used GPT-4 as a judge, the Vicuna team reported that Vicuna-13B outperformed LLaMA and Alpaca in most cases and reached more than 90% of the quality of ChatGPT and Google Bard.

An intriguing online demo is also accessible, allowing users to test and compare Vicuna with other open-source instruction LLMs.

It's important to note that Vicuna's online demo is currently provided as a "research preview intended for non-commercial use only." To deploy your own model, you will need to obtain the original LLaMA weights from Meta and then apply Vicuna's published weight deltas to them.
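Publishing only the difference from LLaMA lets the team share their fine-tuning without redistributing Meta's original weights. Applying the deltas means adding each published difference tensor to the matching LLaMA tensor. The sketch below illustrates that idea with plain Python lists standing in for tensors; the function `apply_delta`, the `Weights` alias, and the parameter names are hypothetical. For a real deployment, follow the instructions in the Vicuna team's FastChat repository, which provides its own script for this step on actual checkpoints.

```python
from typing import Dict, List

# Hypothetical stand-in for a checkpoint: parameter name -> flattened values.
Weights = Dict[str, List[float]]

def apply_delta(base: Weights, delta: Weights) -> Weights:
    """Return target = base + delta, after checking both describe the same model."""
    if base.keys() != delta.keys():
        mismatched = sorted(base.keys() ^ delta.keys())
        raise ValueError(f"parameter names differ: {mismatched}")
    target: Weights = {}
    for name, base_values in base.items():
        delta_values = delta[name]
        if len(base_values) != len(delta_values):
            raise ValueError(f"shape mismatch for {name}")
        # element-wise sum recovers the fine-tuned parameter
        target[name] = [b + d for b, d in zip(base_values, delta_values)]
    return target

# Toy example with made-up numbers
llama = {"layer0.weight": [0.5, -1.0], "layer0.bias": [0.25]}
vicuna_delta = {"layer0.weight": [0.25, 0.5], "layer0.bias": [-0.25]}
print(apply_delta(llama, vicuna_delta))
# {'layer0.weight': [0.75, -0.5], 'layer0.bias': [0.0]}
```

The name and shape checks matter because a delta is only meaningful against the exact base model it was computed from; adding it to a different LLaMA version would silently produce broken weights.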

LLaMA

This Text Generator Can Function As A Chatbot

Although LLaMA was designed as a general-purpose text generator rather than a chatbot, it can also hold conversations. Meta pre-trained it on a large corpus of publicly available text, but the base model is not instruction-tuned, so it works best as a chatbot with careful prompting or after fine-tuning, which is exactly what projects such as Alpaca and Vicuna add.

To use LLaMA as a substitute for ChatGPT, you need intermediate-level programming skills and a capable hardware setup, ideally including a powerful GPU. Fortunately, the LLaMA-13B model is small enough to run directly on a well-equipped local machine.
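A rough way to judge the hardware you need is to multiply the parameter count by the bytes stored per parameter. The sketch below estimates memory for the weights alone; it ignores activations, the context cache, and framework overhead, so treat the results as lower bounds. The precision options are common choices (16-bit floats and 8-bit or 4-bit quantization), assumed here for illustration rather than required by LLaMA itself.

```python
# Assumed storage cost per parameter for common precisions.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}

def weight_memory_gib(n_params: float, precision: str) -> float:
    """Approximate memory for model weights only, in GiB (2**30 bytes)."""
    return n_params * BYTES_PER_PARAM[precision] / 2**30

for precision in BYTES_PER_PARAM:
    print(f"LLaMA-13B @ {precision}: ~{weight_memory_gib(13e9, precision):.1f} GiB")
# LLaMA-13B @ fp16: ~24.2 GiB
# LLaMA-13B @ int8: ~12.1 GiB
# LLaMA-13B @ int4: ~6.1 GiB
```

The fourfold gap between 16-bit and 4-bit weights helps explain why quantized builds are a popular way to run 13B-parameter models on consumer hardware.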

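In terms of natural language processing performance, LLaMA-13B is remarkably capable: Meta reported that it outperforms the much larger GPT-3 (175 billion parameters) on most of the benchmarks it evaluated.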

It can generate content such as poetry and stories, much like OpenAI's GPT-3, GPT-4, and ChatGPT. However, its behavior depends heavily on the prompting and fine-tuning applied during setup.

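LLaMA's training data includes Wikipedia text in 20 languages that use Latin or Cyrillic scripts, though the bulk of it is English, so it can offer a bilingual or even multilingual experience. However, if your primary focus is a particular language, alternatives such as Jasper Chat (a cloud service rather than a local tool) could be worth considering.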

Keep in mind, though, that utilizing LLaMA may involve more coding and technical expertise compared to some other alternatives.

Alpaca

In March 2023, Stanford researchers introduced Alpaca, an instruction-following large language model (LLM) built on LLaMA 7B. The team fine-tuned the LLaMA model on a dataset of 52,000 instruction-following examples derived from InstructGPT (specifically, OpenAI's text-davinci-003 model).

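To create this data, the researchers used a technique called self-instruct. They started with a small seed set of 175 human-written tasks, each pairing an instruction with an output, and used these as examples when prompting text-davinci-003 to generate many more instructions and responses in the same style.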

Preliminary experiments indicate that Alpaca performs at a comparable level to InstructGPT.

The Stanford researchers have made the complete self-instruction dataset available, along with detailed information about the data generation process and the associated code for data generation and model fine-tuning.
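Each record in the released data has an `instruction`, an optional `input`, and an `output`. The sketch below shows how such a record can be turned into a training prompt. The template wording is modeled on the one in the Stanford Alpaca repository, but check the repository for the exact text before reusing it; the function `build_example` and the example record are made up for illustration.

```python
# Templates modeled on the Stanford Alpaca repository (verify exact wording there).
ALPACA_WITH_INPUT = (
    "Below is an instruction that describes a task, paired with an input that "
    "provides further context. Write a response that appropriately completes "
    "the request.\n\n"
    "### Instruction:\n{instruction}\n\n### Input:\n{input}\n\n### Response:"
)
ALPACA_NO_INPUT = (
    "Below is an instruction that describes a task. Write a response that "
    "appropriately completes the request.\n\n"
    "### Instruction:\n{instruction}\n\n### Response:"
)

def build_example(record: dict) -> tuple[str, str]:
    """Return (prompt, target) for one instruction-following record."""
    # Use the longer template only when the record supplies extra input.
    template = ALPACA_WITH_INPUT if record.get("input") else ALPACA_NO_INPUT
    prompt = template.format(
        instruction=record["instruction"], input=record.get("input", "")
    )
    return prompt, record["output"]

record = {"instruction": "Give a synonym for 'happy'.", "input": "", "output": "Joyful."}
prompt, target = build_example(record)
print(prompt)
print(target)
```

During fine-tuning, the model learns to continue each prompt with its target; at inference time, you send only the prompt and let the model write the response.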

However, it is important to note that Alpaca, being based on LLaMA, adheres to the same licensing rules as its base model and is solely intended for academic research, prohibiting any commercial usage.

Moreover, since the researchers utilized InstructGPT in generating the fine-tuning data, they are bound by OpenAI's terms of use, which prohibit the development of models that compete with OpenAI.

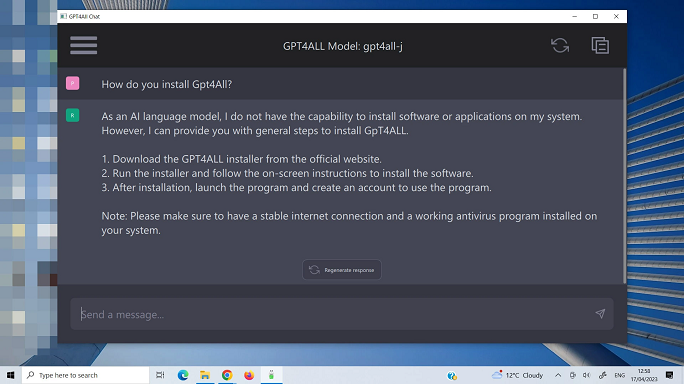

GPT4All

GPT4All Is A Helpful Local Chatbot

GPT4All, developed by the Nomic AI team, is a chatbot trained on a large collection of carefully curated assistant-style interactions, including word problems, code snippets, stories, depictions, and multi-turn dialogues.

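Despite its name, GPT4All is not built on OpenAI's GPT-4. Its original models were fine-tuned from open base models such as LLaMA and GPT-J, using assistant-style data generated with GPT-3.5-Turbo. Those base models were first pre-trained on extensive amounts of text from diverse sources such as books, articles, and web pages.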

This pre-training enables the model to learn the structures and patterns of language, thereby enabling it to generate text based on the input it receives from users.

GPT4ALL offers a comprehensive set of features to enhance user experience.

It includes a Python client for easy integration, support for both GPU and CPU inference, TypeScript bindings, a user-friendly chat interface, and a LangChain integration.

However, it is essential to acknowledge that, like all current language models, the models behind GPT4All have limitations.

Issues such as social biases, hallucinations, and vulnerability to adversarial prompts persist even in OpenAI's GPT-4, as OpenAI itself acknowledges, and researchers are still working to address them.

KoboldAI

The next ChatGPT-style chatbot worth considering is KoboldAI. This open-source, browser-based front end generates human-like text from your prompts and supports both chat and story writing, offering a compelling conversational experience.

One of KoboldAI's standout features is its simple, clutter-free interface. The initial setup involves a few steps, but the GitHub page provides clear and comprehensive instructions that make the process straightforward.

We have to say that the README page accompanying KoboldAI's GitHub repository is truly exceptional, offering one of the most thorough and well-documented resources we've come across in the AI community.

Under the hood, KoboldAI can use a variety of pre-trained models, giving you the flexibility to balance speed and hardware requirements against text quality.

You can experiment with different models to discover which one best suits your specific requirements.

KoboldAI also benefits from its focus on continuous improvement. An active community of developers and users contributes updates and bug fixes, so the project keeps evolving and improving over time.

Dolly

Software Engineers Can Use This ChatGPT-Like Tool To Improve Their Own Language Models

In March 2023, Databricks introduced Dolly, a fine-tuned version of EleutherAI's GPT-J 6B model.

The researchers drew inspiration from the LLaMA and Alpaca projects and fine-tuned GPT-J on the Alpaca dataset. Remarkably, training Dolly took only 30 minutes on a single machine and cost less than $30.

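By using an EleutherAI base model, Databricks avoided the restrictions Meta places on LLaMA-derived large language models (LLMs). However, because the Alpaca training data was generated with an OpenAI model, the first Dolly still was not cleared for commercial use.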

In April 2023, the Databricks team unveiled Dolly 2.0, a 12-billion-parameter model based on EleutherAI's Pythia model family.

For this iteration, Databricks fine-tuned the model using a dataset of 15,000 instruction-following examples exclusively generated by human annotators.

Databricks has released the trained Dolly 2.0 model, which does not carry its predecessor's restrictions and can be used for commercial purposes.

Furthermore, Databricks has made the 15,000-example instruction-following corpus used to fine-tune the Pythia model, called databricks-dolly-15k, publicly available. Machine learning engineers can use this corpus to fine-tune their own large language models (LLMs).
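The databricks-dolly-15k corpus is distributed as JSON Lines, where each record has `instruction`, `context`, `response`, and `category` fields. As a minimal sketch of preparing it for fine-tuning, the code below parses records, appends the optional context to the instruction, and counts examples per category. The function `load_dolly_records`, the "Context:" prompt layout, and the two sample lines are illustrative assumptions, not Databricks' training format or real dataset entries.

```python
import json
from collections import Counter

def load_dolly_records(lines):
    """Parse JSON Lines and turn each record into a (prompt, response) pair."""
    pairs, categories = [], Counter()
    for line in lines:
        if not line.strip():
            continue  # skip blank lines
        rec = json.loads(line)
        prompt = rec["instruction"]
        if rec.get("context"):
            # hypothetical layout: reference text placed after the instruction
            prompt += "\n\nContext:\n" + rec["context"]
        pairs.append((prompt, rec["response"]))
        categories[rec["category"]] += 1
    return pairs, categories

# Made-up records in the dataset's field layout
sample = [
    '{"instruction": "Name a primary color.", "context": "", "response": "Red.", "category": "open_qa"}',
    '{"instruction": "Who wrote the passage?", "context": "Written by Ada.", "response": "Ada.", "category": "closed_qa"}',
]
pairs, categories = load_dolly_records(sample)
print(len(pairs), dict(categories))
# 2 {'open_qa': 1, 'closed_qa': 1}
```

Because the function accepts any iterable of lines, the same code could read a local copy of the file by passing an open file handle instead of the sample list.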

Databricks' contributions with Dolly and Dolly 2 signify important advancements in the field, offering accessible and capable models while also providing valuable resources for further research and development within the machine learning community.

Bottom Line

Above are seven ChatGPT alternatives that can run locally. These “ChatGPT clones” provide users with diverse options for achieving their chatbot goals.

Embracing local chatbots opens the door to privacy-conscious, efficient, and customizable conversational AI experiences. Why say “no” to these fantastic benefits?